Social media’s protected status goes on trial

Does a 1996 law shield tech companies from the consequences of their own recommendations?

This past week the Supreme Court heard arguments in a pair of cases alleging that YouTube and Twitter should be held responsible for two separate ISIS attacks because they recommended ISIS recruitment videos to users. The cases center on a potential conflict between the algorithms these platforms use to monetize the internet and a 1996 law known as Section 230 of the Communications Decency Act, which lower courts have said gives the platforms near-complete civil immunity for third-party content posted on them. Statements made during oral arguments suggested that the Supreme Court may be poised to cut back on that legal protection, but that it is unlikely to issue a broad ruling.

The underlying case against Google concerns Nohemi Gonzalez, a 23-year-old college student studying abroad in France, who was killed at a Paris bistro in 2015 as part of coordinated ISIS attacks that killed 129 people. Her family brought suit alleging that YouTube (owned by Google) aided ISIS’s recruitment by knowingly allowing ISIS to post recruitment videos and by recommending the videos to susceptible individuals.

The lower federal courts threw the lawsuit out, finding that Section 230 shielded the company from liability. Section 230, a short provision referred to as the “26 words that created the internet,” provides that no internet service provider “shall be treated as the publisher or speaker of any information” provided by a third party.

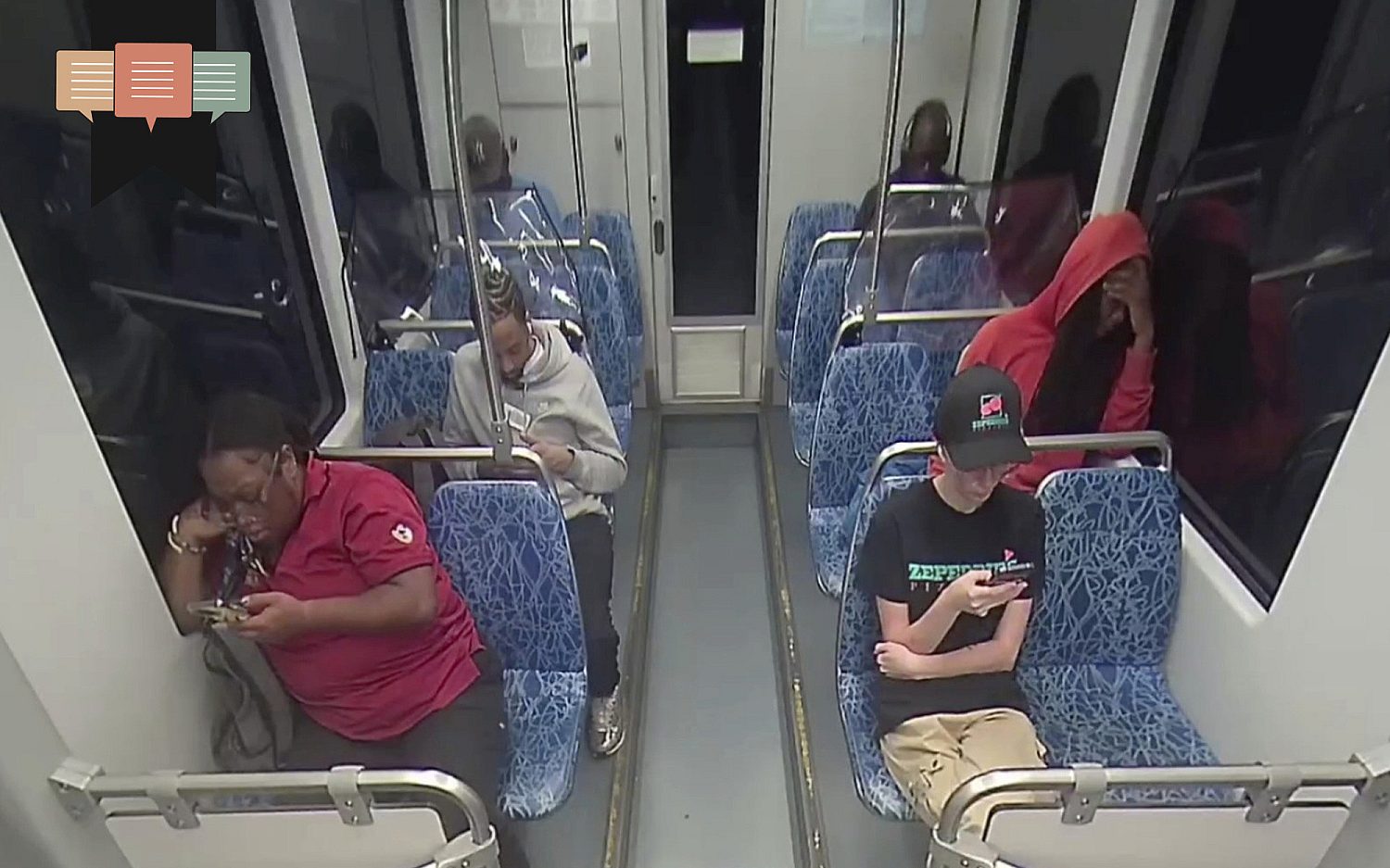

It is no secret that internet platforms make money by selling ads. Algorithms are a big part of the strategy these companies use to keep users scrolling. The Wall Street Journal recently reported that 70 percent of the one billion hours spent on YouTube daily was driven by the company’s recommendations. And studies have shown that the algorithms are addictive and drive users to increasingly extreme content. In fact, a recent study of YouTube videos reported as violent, graphic, or otherwise objectionable concluded that 71 percent of those videos had been seen because they were recommended by the site itself.

Further, the New York Times recently published an internal TikTok document explaining that the twin goals of retention and time meant that TikTok would push “sad content that could induce self-harm” to people struggling with mental health. As one federal judge has noted, “mounting evidence suggests that providers designed their algorithms to drive users toward content and people the users agreed with—and that they have done it too well, nudging susceptible souls ever further down dark paths.”

The Supreme Court agreed to review these cases to determine (among other things) whether Section 230 shields internet platforms when they use algorithms to recommend content.

Plaintiffs argue that Section 230 does not protect internet platforms when the platforms themselves generate the recommendations. The Biden administration agrees in part, arguing that YouTube can be held liable “for its own conduct and its own communications,” such as automatic-play video suggestions that tell a user he “will be interested in” the video.

Big Tech argues that Section 230 immunizes internet platforms from civil liability for any conduct they undertake as a “publisher,” including organizing and recommending content. The federal courts have largely agreed, even though this means internet companies may not be held liable even if their algorithms recommend ISIS videos to terrorists, body-shaming videos to teenage girls with eating disorders, or how-to videos to the depressed and suicidal.

At oral argument, several justices expressed concerns over the breadth of Google’s arguments. Justice Barrett, for example, worried about immunizing platforms that recommend terrorism. Justice Sotomayor was similarly concerned by the algorithm’s recommendation function, noting that it could be used to discriminate based on race. Justice Kagan wondered why the “tech industry gets a pass” from liability, and Justice Alito asked why a platform should be protected for “posting and refusing to take down videos that it knows are defamatory and false.” Justice Jackson focused on the original purpose of Section 230, noting that Google was arguing for the right to promote “offensive material,” which was “exactly the opposite of what Congress was trying to do” in Section 230.

On the other hand, several justices worried about the institutional competence of federal courts to police the billions of searches and recommendations generated daily on the internet. They noted that nonstop litigation was possible absent a Section 230 shield.

All told, the oral argument revealed a Court that was uncomfortable with the broad civil immunity for deliberately false or harmful material proposed by Google, but also very aware of its institutional limitations. Justice Elena Kagan seemed to speak for all of the justices when she described the Supreme Court bench as “not, like, the nine greatest experts on the internet.”

Justice Kagan’s statement, honest as it is, points to the complexity of an issue that will remain a challenge to the government. Even if the Supreme Court limits Section 230, it is very likely that the harmful effects of algorithms will remain an issue for Congress to debate.
