Concerned about digital platforms?

The proposed Section 230 “reform” will create huge problems for free speech

“Section 230,” the federal law that grants digital platforms immunity for content shared by their users, is the law that seemingly everyone loves to hate. Both President Trump and President Biden have vowed to repeal Section 230, and The Atlantic calls it “the law that ruined the Internet.” But the overhaul recently proposed by Democrats would give the government enormous new power to define acceptable speech. That would pose a significant danger to religious organizations and to the broader culture of free and open debate.

Section 230 is a small but powerful part of the 1996 Communications Decency Act, much of which has since been struck down as unconstitutional. In a single sentence, it shields digital platforms from legal liability for content shared by their users: “No provider or user of an interactive computer service shall be treated as the publisher or speaker of any information provided by another information content provider.”

This immunity is not unlimited: platforms can be held liable for hosting content that violates federal criminal law or (since a 2018 amendment) federal or state sex trafficking laws. But support for weakening this immunity further has recently become widespread, especially after a groundbreaking Wall Street Journal investigation of Facebook revealed its contribution to body-image issues, suicide rates, and pornographic content among teens.

On Oct. 13, House Democrats introduced a bill to modify Section 230 with a provision that would expose digital platforms to legal liability if they knowingly or recklessly use personalized algorithms to recommend information that “materially contributed to a physical or severe emotional injury to any person.” (The bill exempts platforms with fewer than five million unique monthly visitors.)

At first glance, the proposal seems fair enough. Recent articles have highlighted the danger of Facebook’s algorithms creating an echo chamber of fake news or YouTube’s algorithms leading users down a “rabbit hole” of conspiracy theories. But this seemingly straightforward phrase marks a dramatic shift in the way the Internet works and hands government regulators enormous new power to decide which speech could cause “severe emotional injury.” It’s not hard to imagine regulators interpreting the phrase broadly enough to suppress controversial opinions, including closely held religious beliefs, and to restrict the ability of religious organizations to reach new communities.

For example, under the new law, algorithms may need to block messages supporting Texas’ recent abortion ban because opponents argue that the law creates “vast psychological consequences.” And couldn’t a sermon video on heaven and hell cause severe emotional injury to someone who doesn’t believe in God? Perhaps the algorithms should stop amplifying religious content altogether, since a recent New Republic article (titled “Can Religion Give You PTSD?”) quotes a psychologist who argues that “all religions, especially patriarchal religions, have an overwhelming negative effect.” That’s the kind of argument we will surely face.

Even the definition of “physical injury” could prove ambiguous, since the meaning of “violent content” has expanded beyond depicting or advocating direct physical harm. Jonathan Haidt and Greg Lukianoff highlight a disturbing trend of college students declaring that “speech is violence,” and the pandemic has shown that reasonable debate about the impact of COVID-19 restrictions can be silenced under the guise of “saving lives.” YouTube already removes scientific discussions weighing the costs and benefits of masking young children simply because such content “contradicts the consensus of local and global health authorities.” Under this new law, tech platforms might also decide not to amplify any disagreement with the government’s COVID restrictions, for fear of liability for “physical injury,” even though the Supreme Court found last fall that New York’s gathering restrictions “single out houses of worship for especially harsh treatment.”

Defining what content causes “physical or severe emotional injury” will require new guidelines from regulators, government lawyers, and judges, who will spend thousands of hours determining which speech violates the new law. “Establishing an unprecedented set of new speech laws and adequately resolving interpretive disputes would require administrative capacity well beyond that of any current U.S. regulator,” argues Daphne Keller, a legal expert in digital platform regulation. That means ever-increasing government regulation.

Roddy Lindsay, a former Facebook data scientist, suggests that the Democratic-led reform to Section 230 will result in Facebook and other platforms removing personalized algorithms altogether, voluntarily eliminating a massive driver of user engagement. Instead, it’s more likely the platforms will simply stop amplifying any content the government decides is too offensive. And that’s something that should concern us all.

These daily articles have become part of my steady diet. —Barbara

Sign up to receive the WORLD Opinions email newsletter each weekday for sound commentary from trusted voices.Read the Latest from WORLD Opinions

R. Albert Mohler Jr. | The assassination of Charlie Kirk and the call of a generation

Erick Erickson | Angry conservatives should remember the young leader’s words about faith

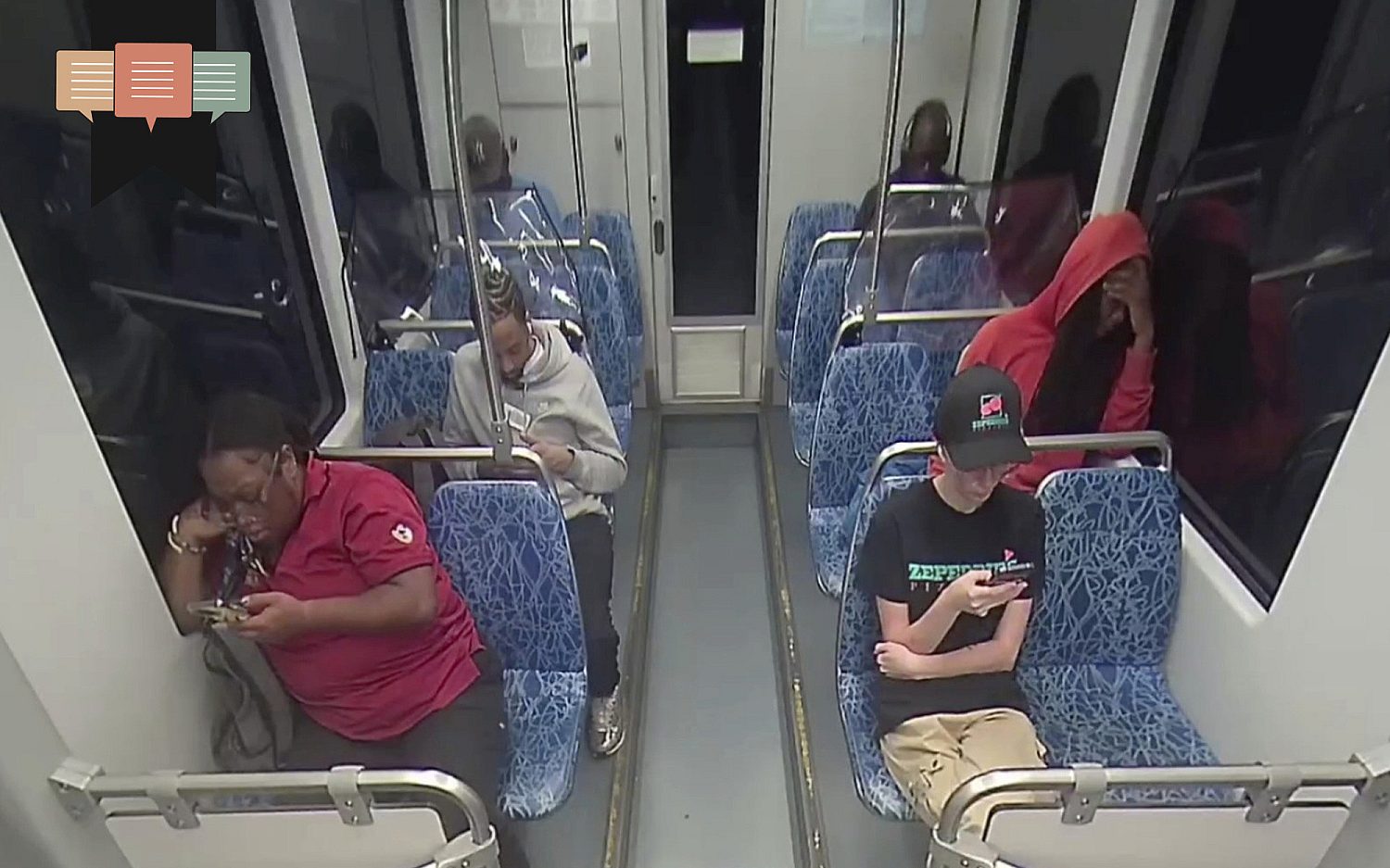

R. Albert Mohler Jr. | Response to release of security video shows deep division between liberals and conservatives

Barton J. Gingerich | The deeply rooted problem with a convert to Roman Catholicism administering the Lord’s Supper in a PCA church

Please wait while we load the latest comments...

Comments

Please register, subscribe, or log in to comment on this article.